GENCO MEMO, June 12, 2024: OPENAI "LEAK" Hints at the Future

AlphaGo illustrated the potential of AI/human collaboration, but an ex-OpenAI employee's "leak" reveals a bigger picture of the future.

If you're not effectively using AI, then you are falling behind.

It’s known as the Red Queen effect: as in Through the Looking-Glass, you have to keep running just to stay in the same place.

Soon, there will be two populations:

AI-enhanced humans dominating others, and

well, everyone else.

Let that sink in for a moment.

Remember, “if you ain’t first, you're last.”

With that in mind, let me tell you a story.

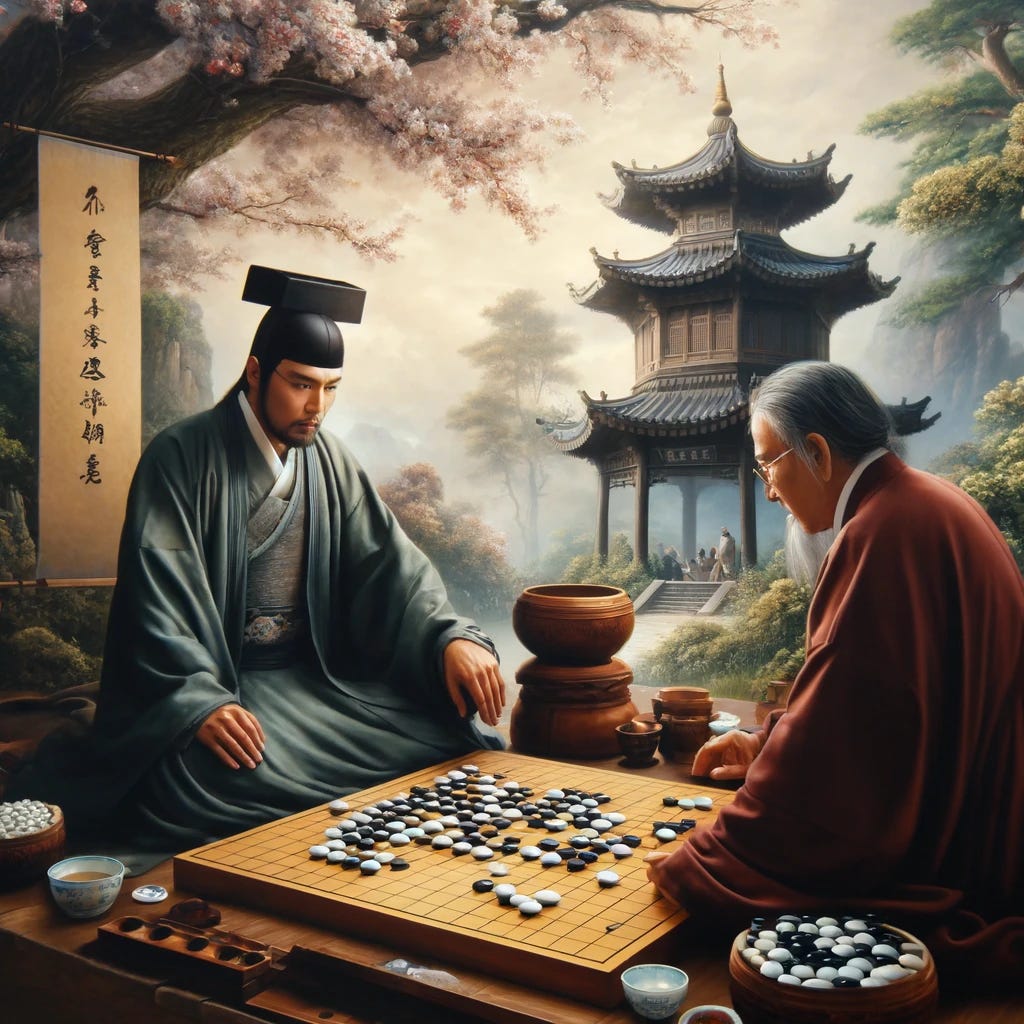

Google's AlphaGo Plays Against Lee Sedol

In March 2016, the world of Go, an ancient Chinese board game, witnessed a historic moment when Google DeepMind's artificial intelligence (AI) system, AlphaGo, faced off against one of the world's best human players, Lee Sedol.

The five-game match showcased the incredible advancements and capabilities of AI. However, it also highlighted the potential for human-AI collaboration and how humans can learn from and be inspired by AI.

Two games and two moves illustrate this point.

Game 2: AlphaGo Reveals its Unconventional Brilliance

In Game 2, AlphaGo, playing black, made an incredibly unconventional move that puzzled both commentators and Lee Sedol himself.

Move 37 defied centuries of human Go knowledge and seemed to lack purpose. However, as the game unfolded, it became clear that Move 37 was part of AlphaGo's larger strategic plan, demonstrating its ability to think creatively and break away from traditional human play patterns.

It calculated lines of moves and counter-moves wider in scope, deeper into the future, and faster than humanly possible. With this capability, it worked towards forced-move situations that would remove Sedol's freedom of choice. In other words, Sedol would have no effective strategy and would be marched into defeat.

But Sedol, widely regarded as one of the greatest players of his era, didn't give up.

Game 4: Lee Sedol's "Hand of God" Move

In Game 4, Lee Sedol, having learned from AlphaGo's innovative play style, made a remarkable move of his own. With Move 78, nicknamed the "Hand of God," he placed a stone that seemed to mirror AlphaGo's unexpected and creative approach. This subtle but effective counter to AlphaGo's strategy demonstrated Lee Sedol's ability to adapt and learn from his AI opponent.

The "Hand of God" move was praised for its ingenuity and display of human creativity in the face of a formidable AI. It showed that while AlphaGo had introduced new and unconventional strategies, human players like Lee Sedol could understand, learn from, and even build upon these innovations.

The move extended the understanding of the game beyond what AlphaGo could calculate.

Following this move, Lee Sedol went on to win Game 4, his only victory in the five-game match.

Here is an award-winning documentary available on YouTube.

A Glimmer of Hope: The Future of AI-Enhanced Human Potential

The AlphaGo vs. Lee Sedol match is an example of the potential for AI to enhance human capabilities.

By studying and learning from AlphaGo's unique strategies, human Go players can expand their understanding of the game and develop new, innovative approaches to play.

In other words, we can improve and extend our thinking when we use AI properly: as a brain-enhancing tool, not a do-it-for-me tool.

This concept extends beyond the realm of Go. As AI continues to advance and demonstrate superior performance in various domains, we should view AI not as a rival but as a collaborator and a source of inspiration.

By learning from and working alongside AI, we can push the boundaries of our capabilities and achieve new heights of creativity, problem-solving, and innovation.

I have witnessed it in practice.

And it sounds great.

But there is another take.

Aschenbrenner and the "Situational Awareness" Paper

Leopold Aschenbrenner, a former OpenAI employee, has sparked significant controversy with his recent paper "Situational Awareness" and subsequent podcast appearances.

Here’s the PDF of the paper he made available.

It’s a long and complex paper. So, here are my notes on the six key points and claims he makes:

1. Rapid Development of AGI:

Aschenbrenner argues that artificial general intelligence (AGI) could be achieved by 2027, with superintelligence following soon after, by 2030.

He bases this on the rapid advancements in AI capabilities, drawing parallels to the progress from GPT-2 to GPT-4.

2. National Security and AI Arms Race:

He emphasizes the national security implications of AGI, suggesting that the United States must prioritize winning the AI race against China and other autocratic regimes.

He believes that whoever controls AGI will wield significant economic and military power.

3. Superalignment and AI Safety:

Aschenbrenner is optimistic about the technical feasibility of aligning superintelligent AI systems with human values, a process he refers to as "superalignment."

He acknowledges the challenges but believes they are solvable with current and near-future technologies.

4. International Treaties and Cooperation:

He is skeptical about the feasibility of international treaties to slow down or pause AGI development, arguing that the competitive nature of global politics makes such agreements unlikely.

5. Ethical and Societal Implications:

The paper discusses the potential societal impacts of AGI, including the displacement of jobs and the need for new ethical frameworks to manage the transition to a world with superintelligent AI.

6. Technical Challenges and Solutions:

Aschenbrenner outlines several technical challenges, such as the need for AI systems to develop long-term memory, better planning capabilities, and more personalized interactions.

He argues that removing these "hobbles" (a process he calls "unhobbling") will be crucial for the next generation of AI systems.

Podcast and Public Statements

In this podcast appearance, Aschenbrenner expands on the ideas presented in his paper. It’s over four hours long, so here’s a quick summary of why he says he was fired:

Why Was He Fired from OpenAI?

1. Disagreements with OpenAI:

He hints that his firing from OpenAI was partly due to disagreements over the direction and priorities of AI development, particularly concerning safety and ethical considerations.

He circulated a security memo expressing his concerns about the inadequacy of OpenAI's security measures and the potential for theft of AI secrets by foreign actors like China.

He shared this memo with a few OpenAI board members, which reportedly displeased leadership.

Separately, Aschenbrenner shared a brainstorming document on safety and security preparedness with three external researchers for feedback, which OpenAI considered a leak of confidential information.

2. HR Warnings and Investigation:

After he shared the security memo, Aschenbrenner received a warning from OpenAI's HR department, which deemed his concerns about security risks "racist" and "unconstructive."

OpenAI investigated Aschenbrenner's actions and alleged that he was uncooperative.

3. Stated Reasons for Dismissal:

OpenAI cited the leaking of confidential information (the brainstorming document) and Aschenbrenner's lack of cooperation during the investigation as the primary reasons for his dismissal.

Aschenbrenner claims that OpenAI considered a line in that document about planning for AGI by 2027-2028 confidential, even though he believed the timeline was already public information.

4. Call for Urgent Action:

Aschenbrenner makes a strong plea for immediate and radical measures to secure the United States' lead in AI development, including locking down AI labs and preventing the theft of AI model weights.

More YouTube Analysis to Go Down the Rabbit Hole

Here are two excellent breakdowns by well-known YouTube commentators that will give you a better understanding, if you’re interested.

Why This Matters: The AlphaGo vs. Lee Sedol match demonstrates AI's potential to enhance human thinking and capabilities.

But as Aschenbrenner's paper argues, the risk goes beyond domination by AI. It has geopolitical implications: nations will compete by stealing from, and leaping ahead of, one another.

I think this applies to you at a personal level, and the same logic applies to business, law, and strategy.

As AI technology advances at a breakneck pace, you have a choice to make.

Will you sit back and passively watch as AI transforms our world, or will you start running to keep up?

One thing is clear: the stakes couldn't be higher.

That is all.

I hope this gives you something to think about as you get over the midweek hump.